Saturday Mar 14, 2020

Attacks on machine learning models: inferring ownership of training data (Ep. 99)

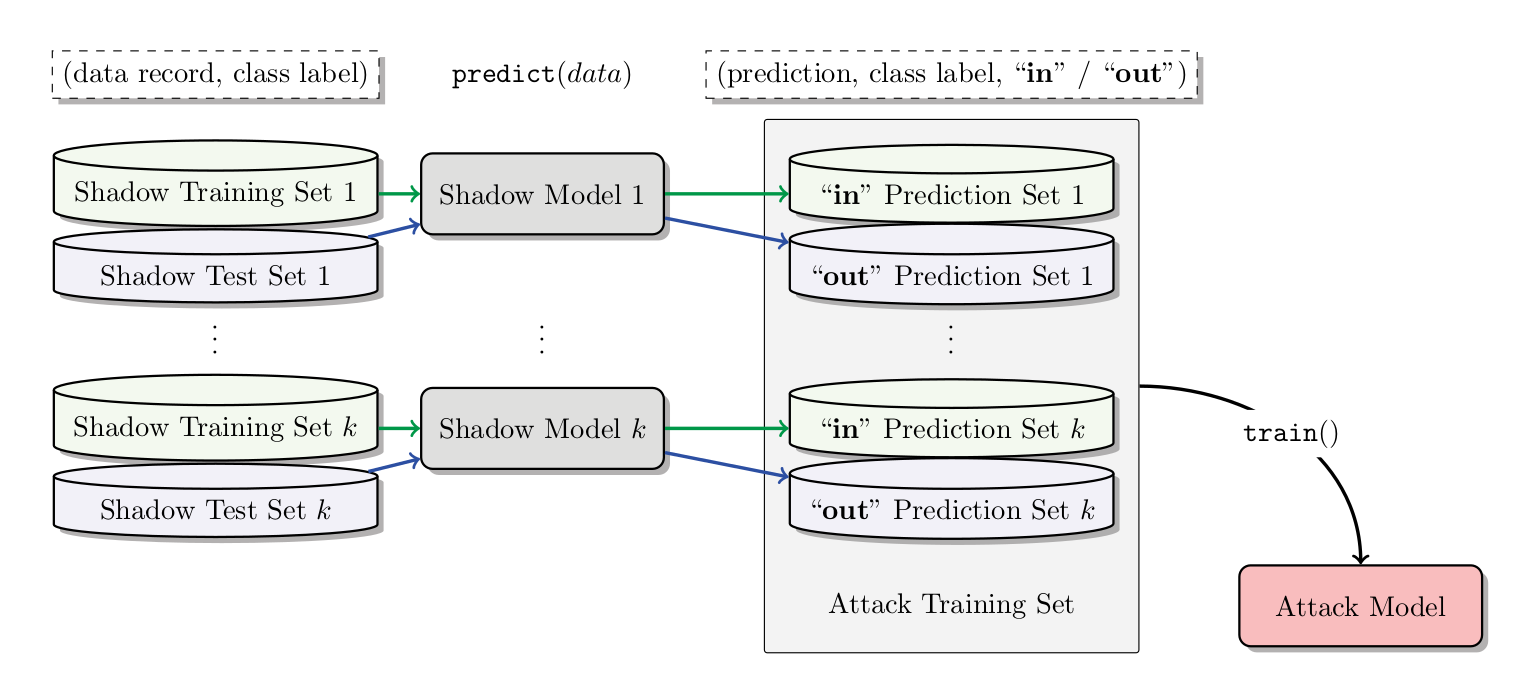

In this episode I explain a very effective technique for inferring whether any record at hand belongs to the (private) dataset used to train a target model. The technique is effective because it works on black-box models: the attacker needs no access to the training data, the model parameters, or the hyperparameters. Such a scenario is realistic and typical of machine-learning-as-a-service APIs.
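To make the idea concrete, here is a minimal sketch in the spirit of the shadow-model approach from the paper listed under References. It is not the exact schema from the episode, and everything in it is an assumption for illustration: the synthetic data, the toy kernel classifier standing in for the target model, the helper names (`sample`, `train`, `attack_features`, `fit_threshold`), and the use of a single confidence threshold in place of a trained attack classifier. The attacker only ever calls the target's prediction function.

```python
import math
import random

def sample(rng, n):
    """Draw n labelled 2-D points from two overlapping Gaussian classes (synthetic)."""
    data = []
    for _ in range(n):
        y = rng.randrange(2)
        data.append(((rng.gauss(y, 1.0), rng.gauss(y, 1.0)), y))
    return data

def train(records, h=0.05, eps=1e-3):
    """Return a black-box predict(x) -> [p0, p1].

    Toy stand-in for a real model: a narrow-kernel classifier that memorises
    its training records, so it is deliberately overfitted.
    """
    def predict(x):
        w = [eps, eps]  # smoothing keeps the output defined far from all data
        for (px, py), y in records:
            d2 = (x[0] - px) ** 2 + (x[1] - py) ** 2
            w[y] += math.exp(-d2 / (2 * h * h))
        total = w[0] + w[1]
        return [w[0] / total, w[1] / total]
    return predict

def attack_features(model, members, non_members):
    """Query the model; return (confidence in the true label, is_member) pairs."""
    rows = [(model(x)[y], 1) for x, y in members]
    rows += [(model(x)[y], 0) for x, y in non_members]
    return rows

def fit_threshold(rows):
    """Pick the confidence cut-off that best separates members from non-members."""
    best_t, best_acc = 0.0, -1.0
    for t, _ in rows:
        acc = sum((c >= t) == bool(m) for c, m in rows) / len(rows)
        if acc > best_acc:
            best_t, best_acc = t, acc
    return best_t

rng = random.Random(0)
N = 100

# The victim: trained on private data the attacker never sees.
target_train = sample(rng, N)
target = train(target_train)

# The attacker: trains shadow models on their own data from a similar
# distribution, where membership is known, and learns what "member" looks like.
shadow_rows = []
for _ in range(3):
    shadow_in, shadow_out = sample(rng, N), sample(rng, N)
    shadow = train(shadow_in)
    shadow_rows += attack_features(shadow, shadow_in, shadow_out)
threshold = fit_threshold(shadow_rows)

# Evaluation: apply the learned rule to the target's outputs only.
unseen = sample(rng, N)
test_rows = attack_features(target, target_train, unseen)
attack_accuracy = sum((c >= threshold) == bool(m) for c, m in test_rows) / len(test_rows)
print(f"threshold={threshold:.3f}  attack accuracy={attack_accuracy:.2f}")
```

An accuracy clearly above 0.5 (random guessing) means the target's confidence scores leak membership. The toy target here is deliberately overfitted, which is the condition under which this kind of attack tends to work best; a well-regularised model would typically leak less.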

This episode is supported by pryml.io, a platform I am personally working on that enables data sharing without giving up confidentiality.

As promised, below is the schema of the attack explained in the episode.

References

Membership Inference Attacks Against Machine Learning Models
